Recently I’ve been involved in the development of two separate web applications, both built with Apache MetaModel as the data access library. In this blog post I want to share what went well and what went badly.

How we configure the webapp

Before we look at what went well and what went badly, let’s quickly go through the anatomy of the web apps. Each of them has a "primary" database plus some "periphery" databases or files for storing purpose-specific items. We use MetaModel to access both kinds, but obviously the demands on them are quite different.

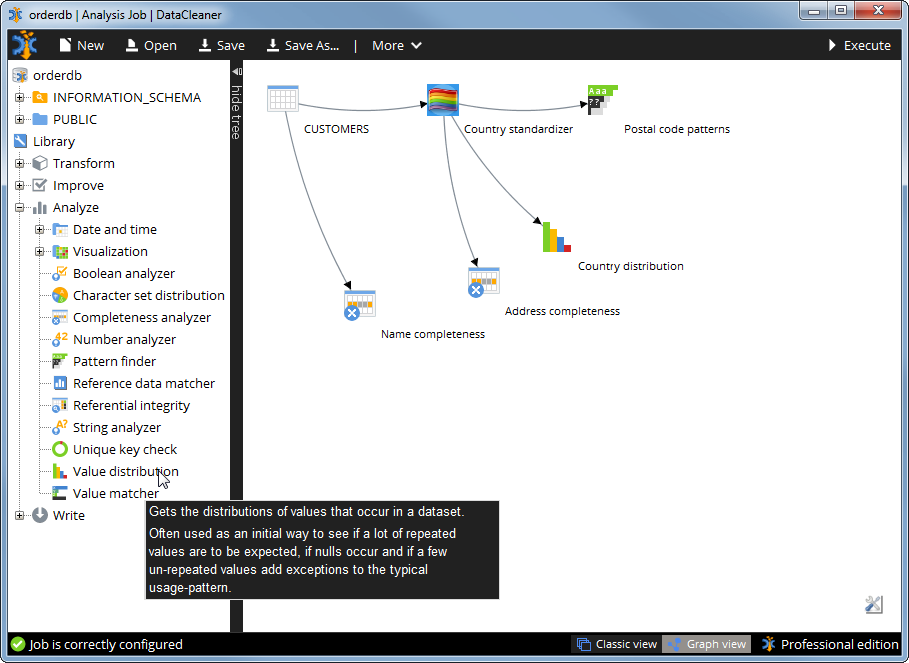

To make the term "periphery" database understandable, let’s take an example: In one of the applications the user submits data which may contain country names. We have a large catalog of known synonyms for country names (like UK, Great Britain, United Kingdom, England etc.), but every once in a while we are unable to match some input. That input is stored in a periphery CSV file so that we can analyze it from time to time and figure out whether the input was garbage or whether we’re missing some synonyms. Similarly, other periphery databases may be used to monitor the success rate of A/B tests (usability experiments) or similar things. We do not want to put any of this data into the "primary" database, since the life-cycle of the data is very different.
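To make the unmatched-input idea concrete, here is a minimal plain-Java sketch. It is hypothetical rather than the application's actual code: the class name `CountryMatcher`, the choice of "United Kingdom" as the canonical name, and the one-quoted-value-per-line CSV layout are assumptions for illustration, and it writes to any `Appendable` instead of going through MetaModel.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;
import java.util.Optional;

// Hypothetical helper: resolves user input to a canonical country name and
// records anything it cannot match as one CSV line for later analysis.
class CountryMatcher {

    private final Map<String, String> synonyms = new HashMap<>();
    private final Appendable unmatchedLog; // e.g. a FileWriter on the periphery CSV file

    CountryMatcher(Appendable unmatchedLog) {
        this.unmatchedLog = unmatchedLog;
    }

    void addSynonym(String synonym, String canonicalName) {
        synonyms.put(normalize(synonym), canonicalName);
    }

    // Returns the canonical name, or empty if the input is unknown.
    Optional<String> match(String input) {
        String canonical = synonyms.get(normalize(input));
        if (canonical == null) {
            try {
                // Quote the value and double embedded quotes so the line stays valid CSV.
                unmatchedLog.append('"').append(input.replace("\"", "\"\"")).append("\"\n");
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        }
        return Optional.ofNullable(canonical);
    }

    // Case and surrounding whitespace should not affect matching.
    private static String normalize(String value) {
        return value.trim().toLowerCase(Locale.ROOT);
    }
}
```

Reviewing the logged values later shows whether an entry was garbage or a synonym worth adding with `addSynonym`.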

In the cases I’ve worked on, we’ve been using an RDBMS (PostgreSQL to be specific) as the "primary" database and CSV files, Salesforce.com and CouchDB as "periphery" databases.

The primary database is configured using Spring. One of the requirements we have is the ability to externalize all connection information, and we leverage Spring’s property placeholder for this:

    <context:property-placeholder location="file:///${user.home}/datastore.properties" />

    <bean class="org.apache.metamodel.spring.DataContextFactoryBean">
        <property name="type" value="${datastore.type}" />
        <property name="driverClassName" value="${datastore.driver}" />
        <property name="url" value="${datastore.url}" />
        <property name="username" value="${datastore.username}" />
        <property name="password" value="${datastore.password}" />
    </bean>

If you prefer, you can also configure a traditional java.sql.DataSource and inject it into this factory bean with the name ‘dataSource’. But with the approach above even the 'type' of the datastore is externalizable, meaning that we could potentially switch our web application’s primary database to MongoDB or something like that, just by changing the properties.
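The externalized file is an ordinary Java properties file, so it can also be read outside Spring, for instance by a small launcher or command-line tool. The sketch below is hypothetical: the `DatastoreSettings` class is not part of MetaModel or Spring, and the example values in the comment (including `jdbc` as the type) are placeholders. Only the property keys come from the configuration above.

```java
import java.io.IOException;
import java.io.Reader;
import java.io.UncheckedIOException;
import java.util.List;
import java.util.Properties;

// Hypothetical helper: loads the externalized settings and fails fast with a
// clear message if a key used by the Spring placeholders is missing.
//
// Example file contents (all values are placeholders):
//   datastore.type=jdbc
//   datastore.driver=org.postgresql.Driver
//   datastore.url=jdbc:postgresql://localhost/webapp
//   datastore.username=webapp
//   datastore.password=secret
class DatastoreSettings {

    // The keys referenced by the ${...} placeholders in the bean definition.
    static final List<String> REQUIRED_KEYS = List.of(
            "datastore.type", "datastore.driver", "datastore.url",
            "datastore.username", "datastore.password");

    static Properties load(Reader source) {
        Properties properties = new Properties();
        try {
            properties.load(source);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        for (String key : REQUIRED_KEYS) {
            if (properties.getProperty(key) == null) {
                throw new IllegalStateException("Missing property: " + key);
            }
        }
        return properties;
    }
}
```

Switching the backend then means editing this one file; nothing in the application code refers to a concrete database.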

Implementing a backend component using MetaModel

When we need access to data, we simply inject that DataContext. If we also need to modify the data, we inject the sub-interface UpdateableDataContext instead. Here’s an example:

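    import org.apache.metamodel.UpdateableDataContext;
    import org.apache.metamodel.data.DataSet;
    import org.apache.metamodel.data.Row;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.stereotype.Component;

    @Component
    public class UserDao {

        private final UpdateableDataContext _dataContext;

        @Autowired
        public UserDao(UpdateableDataContext dataContext) {
            _dataContext = dataContext;
        }

        public User getUserById(Number userId) {
            DataSet ds = _dataContext.query()
                    .from("users")
                    .select("username", "name")
                    .where("id").eq(userId)
                    .execute();
            try {
                if (!ds.next()) {
                    // no user with that id
                    return null;
                }
                // values come back in select order: 0 = username, 1 = name
                Row row = ds.getRow();
                return new User(userId, row.getValue(0), row.getValue(1));
            } finally {
                // always release the resources held by the data set
                ds.close();
            }
        }
    }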

In reality we use a couple of String constants and so on here, to avoid typos slipping into e.g. column names. One of the good parts here is that we’re completely in control of the query plan, the query building is type-safe and neat, and the DataContext object being injected is not tied to any particular backend. We can run this query in a test using a completely different type of backend without issues. More about testing in a jiffy.
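As an illustration of the String-constants idea, a constants holder could look like the sketch below. The class and field names are hypothetical; only the table and column names are taken from the UserDao example.

```java
// Hypothetical constants holder for the "users" table queried by UserDao.
final class UsersTable {

    static final String TABLE_NAME = "users";
    static final String COLUMN_ID = "id";
    static final String COLUMN_USERNAME = "username";
    static final String COLUMN_NAME = "name";

    private UsersTable() {
        // constants only, no instances
    }
}
```

The query would then read `.from(UsersTable.TABLE_NAME).select(UsersTable.COLUMN_USERNAME, UsersTable.COLUMN_NAME)`, so a misspelled column becomes a compile error instead of a runtime surprise.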

Automatically creating the schema model

To make deployment as easy as possible, we also ensure that our application can automatically build the tables it needs when it starts. In existing production environments the tables will already be there, but for new deployments and testing, this capability is great. We’ve implemented this as a Spring bean that has a @PostConstruct method to do the bootstrapping of new DataContexts. Here’s how we could build a "users" table:

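    import javax.annotation.PostConstruct;

    import org.apache.metamodel.UpdateableDataContext;
    import org.apache.metamodel.create.CreateTable;
    import org.apache.metamodel.schema.ColumnType;
    import org.apache.metamodel.schema.Schema;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.stereotype.Component;

    @Component
    public class DatastoreBootstrap {

        private final UpdateableDataContext _dataContext;

        @Autowired
        public DatastoreBootstrap(UpdateableDataContext dataContext) {
            _dataContext = dataContext;
        }

        @PostConstruct
        public void initialize() {
            Schema schema = _dataContext.getDefaultSchema();
            // only create the table if it does not exist yet
            if (schema.getTableByName("users") == null) {
                CreateTable createTable = new CreateTable(schema, "users");
                createTable.withColumn("id").ofType(ColumnType.INTEGER).asPrimaryKey();
                createTable.withColumn("username").ofType(ColumnType.VARCHAR).ofSize(64);
                createTable.withColumn("password_hash").ofType(ColumnType.VARCHAR).ofSize(64);
                createTable.withColumn("name").ofType(ColumnType.VARCHAR).ofSize(128);
                _dataContext.executeUpdate(createTable);
            }
            // make sure the cached schema model reflects the new table
            _dataContext.refreshSchemas();
        }
    }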

So far we’ve demoed things that, honestly, many other persistence frameworks can do as well. But now it gets exciting, because in terms of testability I believe Apache MetaModel has something to offer which almost no other...

Testing your components

Using a PojoDataContext (a DataContext based on in-memory Java objects) we can bootstrap a virtual environment for our test case that has an extremely low footprint compared to normal integration testing. Let me demonstrate how we can test our UserDao:

    @Test
    public void testGetUserById() {
        // set up test environment.
        // the test datacontext is an in-memory POJO datacontext
        UpdateableDataContext dc = new PojoDataContext();
        new DatastoreBootstrap(dc).initialize();

        // insert a few user records for the test only.
        Table usersTable = dc.getDefaultSchema().getTableByName("users");
        dc.executeUpdate(new InsertInto(usersTable).value("id", 1233).value("name", "John Doe"));
        dc.executeUpdate(new InsertInto(usersTable).value("id", 1234).value("name", "Jane Doe"));

        // perform test operations and assertions.
        UserDao userDao = new UserDao(dc);
        User user = userDao.getUserById(1234);
        assertEquals(1234, user.getId());
        assertEquals("Jane Doe", user.getName());
    }

This is in my opinion a real strength of MetaModel. First we insert some records (physically represented as Java objects) into our data context, and then we can test the querying and everything without even having a real database engine running.

Further evaluation

The examples above are of course just part of the experience of building a few webapps on top of Apache MetaModel. I noted down a lot of things during development, which I can summarize in the following list of pros and cons.

Pros:

- Testability with POJOs

- It’s also easy to facilitate integration testing or manual monkey testing using e.g. Apache Derby or the H2 database. We have a main method in our test-source that will launch our webapp and have it running within a second or so.

- We use the same API for different kinds of databases.

- When we do A/B testing, the metrics we are interested in change a lot. So our results are stored in a CouchDB database instead, because of its dynamic schema nature.

- For certain unexpected scenarios or exceptional values, we store data for debugging and analytical needs in CSV files, also using MetaModel. Having stuff like that in files makes it easy to share with people who want to manually inspect what is going on.

- Precise control over queries and over the scope of update scripts (transactions) is a great benefit. Compared with many Object-Relational-Mapping (ORM) frameworks, this feels like returning home and not having to worry about cascading effects of your actions. You get what you ask for and nothing more.

- Concerns like SQL injection are already taken care of by MetaModel. Proper escaping and handling of difficult cases in query literals is not your concern.

Cons:

- At this point Apache MetaModel does not have any object mapping facilities at all. While we do not want an ORM framework, we could definitely use some basic "Row to Object" mapping to reduce boilerplate.

- There’s currently no API in MetaModel for altering tables. This means that we still have a few cases where we do this using plain SQL, which of course is not portable and therefore not as easily testable. But we can manage to isolate this to just a few places in the code, since altering tables is quite unusual.

- In one of our applications we have the unusual situation that one of the databases can be altered at runtime by a different application. Since MetaModel caches the schema structure of a DataContext, such a change is not automatically reflected in our code. We can call DataContext.refreshSchemas() to flush our cached model, but obviously that needs to happen intelligently. This is only a concern for databases that have tables altered at runtime though (which is in my opinion quite rare).
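One way to make the schema refresh from the last point "intelligent" is to throttle it. The sketch below is hypothetical: the `ThrottledRefresher` class and its parameters are not part of MetaModel, and it only assumes that the refresh action is something like `dataContext::refreshSchemas`. The clock is injected so the behaviour can be tested without waiting.

```java
import java.util.function.LongSupplier;

// Hypothetical helper: runs a refresh action at most once per interval, so
// callers can ask for a refresh often without flushing the cache every time.
class ThrottledRefresher {

    private final Runnable refreshAction;   // e.g. dataContext::refreshSchemas
    private final long minIntervalMillis;   // minimum time between two refreshes
    private final LongSupplier clock;       // e.g. System::currentTimeMillis
    private long lastRefreshMillis;
    private boolean refreshedOnce;

    ThrottledRefresher(Runnable refreshAction, long minIntervalMillis, LongSupplier clock) {
        this.refreshAction = refreshAction;
        this.minIntervalMillis = minIntervalMillis;
        this.clock = clock;
    }

    // Returns true if the refresh action actually ran.
    synchronized boolean refreshIfStale() {
        long now = clock.getAsLong();
        if (refreshedOnce && now - lastRefreshMillis < minIntervalMillis) {
            return false; // refreshed recently enough, keep the cached model
        }
        refreshAction.run();
        lastRefreshMillis = now;
        refreshedOnce = true;
        return true;
    }
}
```

A caller could invoke `refreshIfStale()` before each query against the externally modified database. The interval is a trade-off between how stale the cached schema may get and how often the schema is re-read.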

I hope this may be useful for you to evaluate the library. If you have questions or remarks, or just feel like getting closer to the Apache MetaModel project, I strongly encourage you to join our dev mailing list and raise all your ideas, concerns and questions!